Inside this Article:

- JavaScript SEO & Google Crawlability Issues

- 1. Buttons, Links, and Other Features Don’t Work Without JavaScript

- 2. Infinite Scrolling and Pagination Issues

- 3. Missing or Hidden Content

- 4. Internal Link Errors

- Common JavaScript SEO Site Speed Issues

- 5. Heavy or Unused JS Files

- 6. Site Speed Issues: Render-Blocking Resources

- Only the Tip of the Iceberg for JavaScript SEO

From crawling and indexation issues to poor site speed performance, these common JavaScript SEO issues can impact the visibility and success of your website in search results.

Over the past decade, JavaScript has gained huge popularity and usage in web development.

This has resulted in more websites using JavaScript frameworks and libraries (such as React) to power interactive features like animation and navigation enhancements.

As developers focus on creating more JavaScript-heavy websites to engage users, a number of SEO challenges are bound to arise.

One central issue that presents itself time and time again is indexation.

JavaScript SEO & Google Crawlability Issues

Although other search engines still struggle, Google has come a long way in its ability to crawl and index websites with JavaScript.

In the past, Google’s search engine crawlers often had difficulty indexing websites that used JavaScript because they were not able to properly read and render the code.

As Google’s crawlers got better at reading JavaScript, every page that used JavaScript entered a rendering queue, which often delayed indexation and ranking, sometimes by up to a week.

However, since 2019, the evergreen Googlebot has been advanced enough that pages spend little to no time in the rendering queue, anywhere from 5 seconds to minutes.

Despite Googlebot’s advancements, websites heavily built with JavaScript still pose challenges in relation to site speed performance and website rendering, both of which heavily impact SEO performance.

Here are a few of the common SEO issues you’ll find in websites with JavaScript:

1. Buttons, Links, and Other Features Don’t Work Without JavaScript

JavaScript can make a website much more aesthetically pleasing and engaging to users.

But it can also cause problems for a search engine crawler if it’s unable to interact with the page, making it difficult to index content properly.

Several culprits become apparent once JavaScript is disabled in a web browser.

If you’re using links, buttons, or other features that trigger JavaScript events, then you may be unintentionally excluding users who have JavaScript disabled or unavailable in their browser.

For example, if you have a JavaScript-enabled carousel button that scrolls to a picture when the user clicks on it, users without JavaScript won’t be able to click the button or see the content. This creates a poor user experience, and search engine crawlers are also unable to click these buttons.

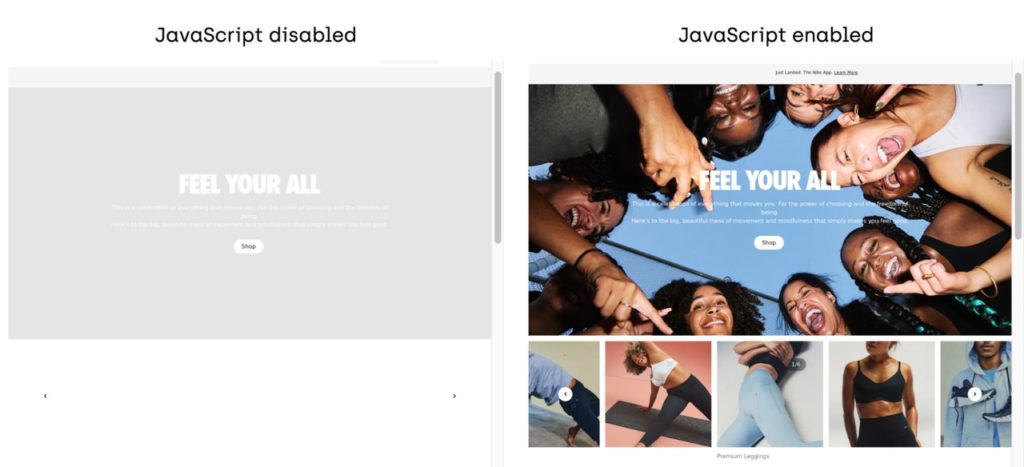

source: https://www.nike.com/

How to Make Your JavaScript SEO-Friendly

- Disable JavaScript in your browser to detect any issues that exist on the site. Tools such as Web Developer or WWJD from Onely allow you to view your site without JavaScript enabled.

- WWJD will show you side-by-side screenshots of your site with and without JavaScript enabled.

source: https://www.onely.com/tools/wwjd/

source: https://www.nike.com/

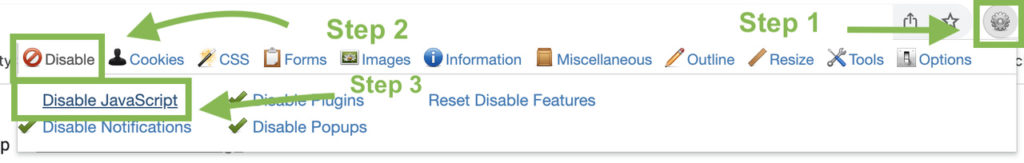

- Web Developer is a web extension that can be installed on your Chrome browser. Here is how to use it:

- Step 1: Once installed, click on the extension.

- Step 2: Click the “Disable” tab.

- Step 3: Click “Disable JavaScript”.

- You’ll need to refresh the page for the change to take effect.

- You should also see a green checkmark next to “Disable JavaScript”.

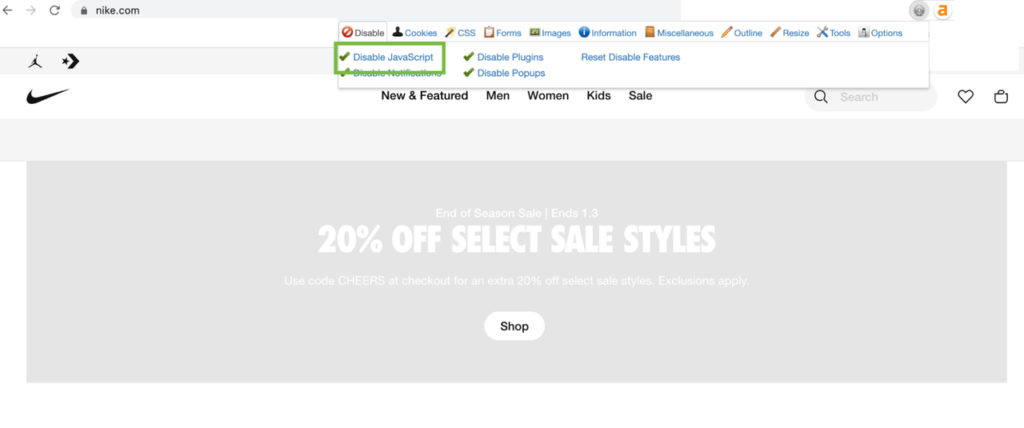

source: https://www.nike.com/

- Whether it’s a broken button or another feature on your page, consider alternative solutions that deliver the enhancements without JavaScript. This could include solutions such as:

- JavaScript polyfills

- Alternative version of the site

- Server-side rendering to send as a complete HTML page

- As a long-term strategy, include progressive enhancement as part of your web development process in order to keep your content and functionality accessible without JavaScript.

- Progressive enhancement focuses on the concept of building a website by first prioritizing the most basic features and then adding more complex features on top, allowing all visitors, regardless of their device or technical capabilities, access to the content.
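As a rough illustration of progressive enhancement, the sketch below assumes the carousel's "next" control is already a plain link in the HTML, so it works with JavaScript disabled. The script then upgrades that link when JavaScript is available. The class name `carousel-next`, the URL, and the `showImage` callback are hypothetical names chosen for this example.

```javascript
// Baseline markup (works with JavaScript disabled, and crawlers can follow it):
//   <a class="carousel-next" href="/gallery?image=2">Next image</a>
// The class name, URL, and showImage callback are hypothetical.

function enhanceCarouselLinks(doc, showImage) {
  const links = doc.querySelectorAll("a.carousel-next");
  for (const link of links) {
    link.addEventListener("click", (event) => {
      // Cancel the full page load only because the script can do the job itself.
      event.preventDefault();
      showImage(link.getAttribute("href"));
    });
  }
  return links.length; // number of links that were enhanced
}

// In a browser, this might be wired up as:
//   enhanceCarouselLinks(document, (url) => { /* swap the image in place */ });
```

If the script never loads, the link still navigates to the next image, so neither users nor crawlers lose access to the content.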

2. Infinite Scrolling and Pagination Issues

Another example of a JavaScript SEO issue appears with infinite-scrolling features.

When JavaScript is disabled for an infinite-scrolling feature, the site may be missing paginated links (or may have a broken link), preventing crawlers from indexing content on pages 2, 3, and so forth.

Blogs that rely heavily on infinite scrolling may have difficulty getting content indexed if crawlers are unable to access the content through paginated links.

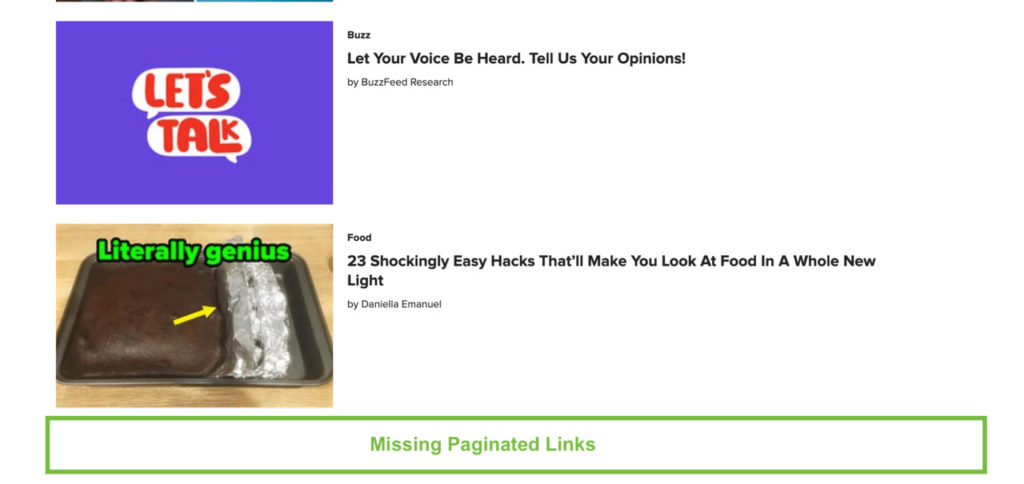

Infinite Scrolling (below)

When JavaScript is disabled, the page lacks paginated links leading to more content.

source: https://www.buzzfeed.com/

How to Make Your JavaScript SEO-Friendly:

- Implement pagination loading to create links where search engine crawlers are led to more content and can index the content.

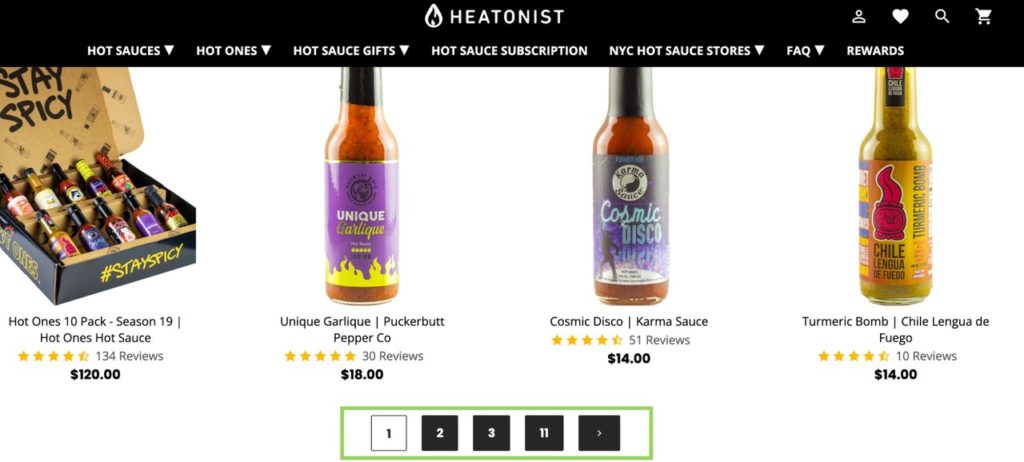

- A great example of this is Heatonist.com. On the All Hot Sauces page, infinite scrolling is utilized for easy browsing. However, even when JavaScript is disabled, the page has paginated links for crawlers to index.

source: https://heatonist.com/collections/all-hot-sauces
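One way to provide that fallback is to render plain paginated links on the server, underneath the infinite-scroll container. The sketch below is a minimal example under some assumptions: the `?page=N` query parameter and the function name are hypothetical, and `basePath` is assumed to be a trusted, already-encoded path.

```javascript
// Builds crawlable pagination markup as a string.
// Assumes pages are addressed as `${basePath}?page=N` (a hypothetical URL scheme).
function buildPaginationLinks(basePath, currentPage, totalPages) {
  const items = [];
  for (let page = 1; page <= totalPages; page += 1) {
    const href = page === 1 ? basePath : `${basePath}?page=${page}`;
    const current = page === currentPage ? ' aria-current="page"' : "";
    // Each page gets a real <a> element with an href that crawlers can follow.
    items.push(`<a href="${href}"${current}>${page}</a>`);
  }
  return `<nav aria-label="Pagination">${items.join("")}</nav>`;
}

console.log(buildPaginationLinks("/collections/all-hot-sauces", 1, 3));
```

The infinite-scroll script can then hide or replace this block for users with JavaScript, while the links remain in the HTML that crawlers receive.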

3. Missing or Hidden Content

Another common mishap among many JavaScript sites is invisible content.

Once JavaScript is disabled in the browser, the user may find that content is no longer visible on the page, which can hinder a site’s organic visibility since the crawler is unable to see it.

This also applies to content hidden under accordion-style or expansion buttons, which crawlers cannot click and detect.

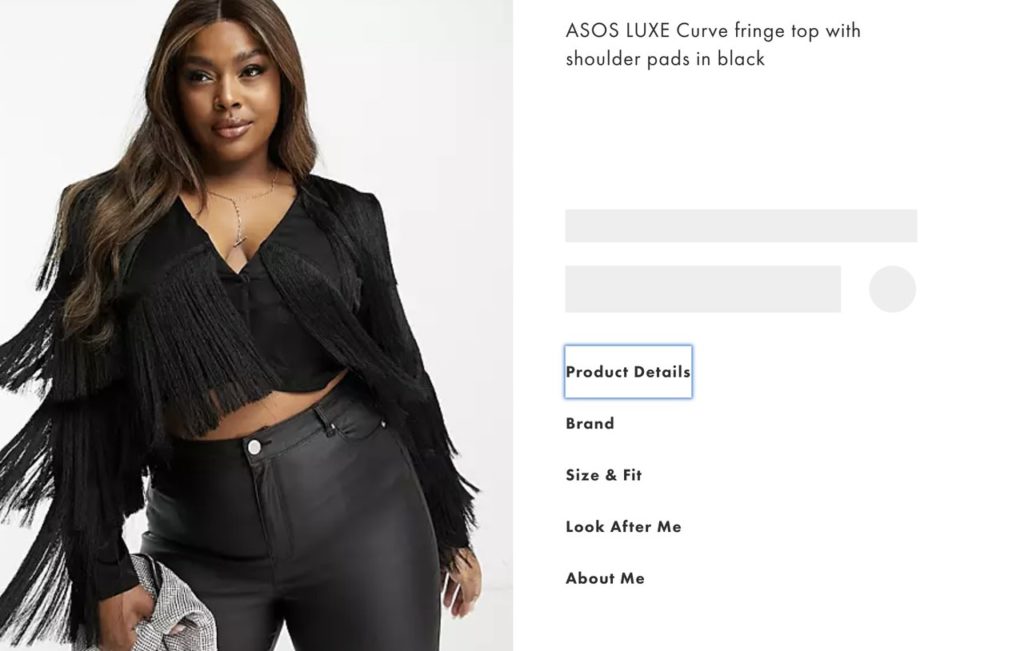

For ecommerce websites, this is a serious problem: if crawlers cannot read product descriptions, they may conclude that your product pages have thin content.

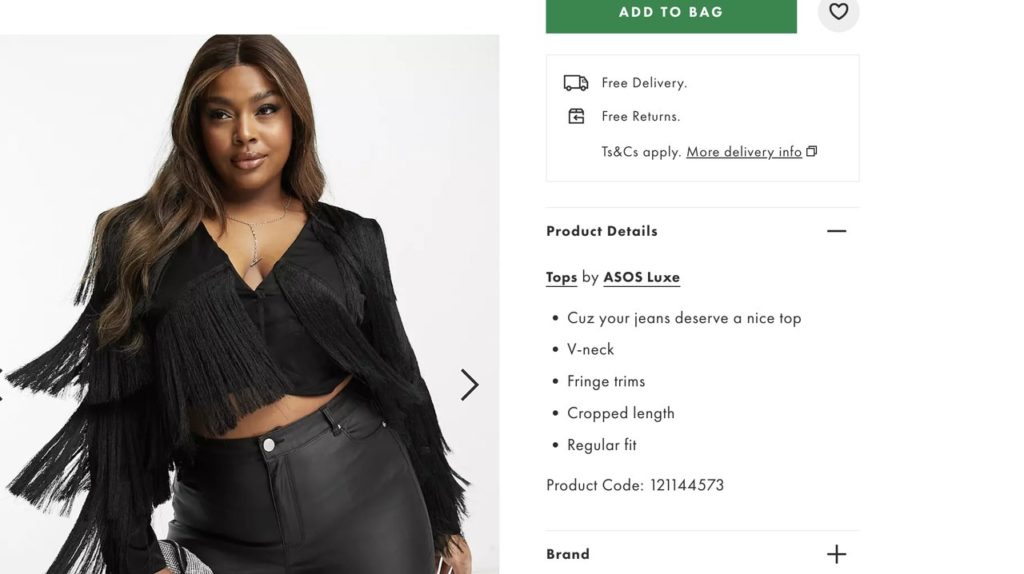

Visible content with JavaScript

When JavaScript is disabled, content is missing.

source: https://www.asos.com/us/asos-luxe/asos-luxe-curve-fringe-top-with-shoulder-pads-in-black/prd/203435874?clr=black&colourWayId=203435891&cid=30058

How to Make Your JavaScript SEO-Friendly

There are a few different ways you can handle missing and hidden content on your site:

- Pre-rendering: Create a static HTML version of the page and serve this to the client and search engine crawlers. This will make all content visible to the crawler in HTML format, supporting indexation and keyword ranking efforts.

- For content hidden under accordion and expansion buttons, you could also move the content to under a visible element in HTML format.
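To make the pre-rendering idea concrete, here is a minimal sketch that turns product data into a static HTML fragment on the server, so the description is present in the markup before any script runs. The `product` object shape and the function names are assumptions for illustration, not part of any particular framework.

```javascript
// Escapes text so it can be placed safely inside HTML.
function escapeHtml(text) {
  return String(text)
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;")
    .replace(/'/g, "&#39;");
}

// Renders a product (hypothetical shape: { name, description, details[] })
// into plain HTML with the description in a visible element.
function renderProductHtml(product) {
  const details = product.details
    .map((item) => `<li>${escapeHtml(item)}</li>`)
    .join("");
  return [
    "<article>",
    `<h1>${escapeHtml(product.name)}</h1>`,
    `<p>${escapeHtml(product.description)}</p>`,
    "<h2>Product details</h2>",
    `<ul>${details}</ul>`,
    "</article>",
  ].join("\n");
}

console.log(
  renderProductHtml({
    name: "Fringe Top",
    description: "Fringe top with shoulder pads & lining",
    details: ["Crew neck", "Regular fit"],
  })
);
```

Because the description and details are ordinary elements in the response, a crawler can read them even if it never clicks an accordion or runs the page's scripts.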

4. Internal Link Errors

Internal link errors are also a frequent issue on sites utilizing JavaScript frameworks such as Angular, Vue, and React.

Although these frameworks make web development much easier, they can create major headaches when internal links are injected without the proper elements, making links uncrawlable for search engines.

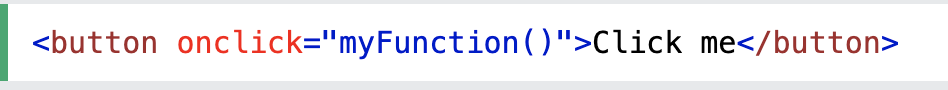

In many cases within JavaScript frameworks, internal links are created using onclick events rather than <a> tag elements.

For example, this onclick event:

source: https://www.w3schools.com/jsref/event_onclick.asp

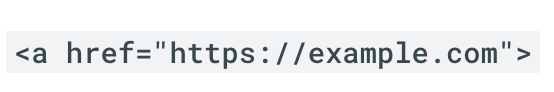

Rather than an internal link that uses an <a> tag with an href attribute:

source: https://developers.google.com/search/docs/crawling-indexing/links-crawlable

According to Google’s Search Central documentation,

“Google can follow links only if they are an <a> tag with an href attribute. Links that use other formats won’t be followed by Google’s crawlers. Google cannot follow <a> links without an href attribute or other tags that perform as links because of script events.”

How to Make Your JavaScript SEO-Friendly:

- Create internal links using only resolvable URLs, in <a> tags with href attributes instead of onclick handlers.
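To spot the difference in practice, the sketch below scans an HTML string and lists opening tags that are likely to be uncrawlable: <a> tags with no usable href, and other elements that carry an onclick handler. It is a rough, regex-based check for manual review rather than a full HTML parser (for example, it will misread attribute values containing ">"), and the function name is made up for this example.

```javascript
// Returns opening tags that look like uncrawlable links.
// Rough heuristic only: non-link buttons with onclick are flagged too.
function findUncrawlableLinks(html) {
  const problems = [];
  const openTag = /<([a-zA-Z][\w-]*)\b([^>]*)>/g;
  for (const match of html.matchAll(openTag)) {
    const [tag, name, attrs] = match;
    const hasHref = /\shref\s*=\s*["']?[^"'\s>]/i.test(attrs); // non-empty href
    const hasOnclick = /\sonclick\s*=/i.test(attrs);
    const isAnchor = name.toLowerCase() === "a";
    if (isAnchor && !hasHref) {
      problems.push(tag); // <a> without an href
    } else if (!isAnchor && hasOnclick) {
      problems.push(tag); // another element acting as a link via script
    }
  }
  return problems;
}

const sample = [
  '<a href="/shoes">Shoes</a>', // crawlable
  `<a onclick="goTo('/shoes')">Shoes</a>`, // no href
  `<span onclick="location.href='/sale'">Sale</span>`, // not an <a> tag
].join("");

console.log(findUncrawlableLinks(sample));
```

Only the first link in the sample is one Google's documentation describes as followable; the other two are reported for review.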

Common JavaScript SEO Site Speed Issues

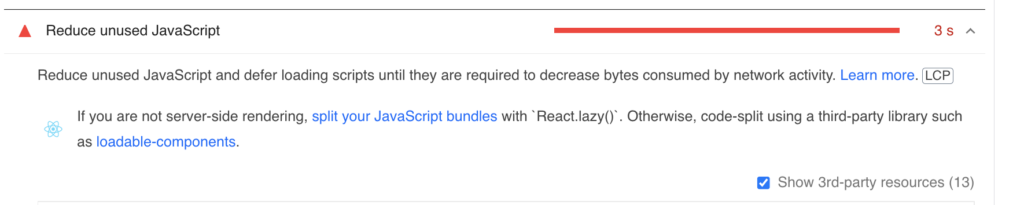

5. Heavy or Unused JS Files

With the introduction of Core Web Vitals, Google has placed more weight on site speed performance.

If your website is slow to load, it can negatively impact your search engine rankings as well as user experience.

Both large and unused JavaScript files can weigh down your site, slowing down site speed performance by eating up bandwidth that could be allocated to other resources.

Since browsers need to download, parse, and execute JavaScript files before the page is fully usable, larger files take longer to process.

Tools such as Google’s PageSpeed Insights (or Lighthouse) will flag JavaScript files that contain more than 20 kibibytes of unused code, as well as any large JavaScript files that can be optimized.

source: https://pagespeed.web.dev/

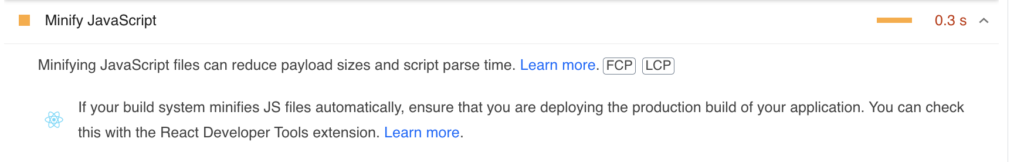

How to Make Your JavaScript SEO-Friendly:

- Remove any unused JavaScript files. To find unused scripts, open Chrome DevTools and check the Coverage tab.

- Use minifying tools, extensions, or plugins such as Terser to reduce the size of large JavaScript files.
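If you export coverage data, a short script can rank files by how much of their code went unused. The sketch below assumes each entry has the shape `{ url, text, ranges }`, where `ranges` lists the character ranges that actually ran; check your own export before relying on that. The function name is hypothetical, and character counts are only an approximation of bytes.

```javascript
// 20 KiB, the threshold mentioned above for flagging unused JavaScript.
const UNUSED_THRESHOLD_BYTES = 20 * 1024;

// entries: [{ url, text, ranges: [{ start, end }] }] (assumed coverage shape)
function summarizeUnusedJs(entries, threshold = UNUSED_THRESHOLD_BYTES) {
  return entries
    .map((entry) => {
      const total = entry.text.length; // characters, used as a byte estimate
      const used = entry.ranges.reduce(
        (sum, range) => sum + (range.end - range.start),
        0
      );
      return { url: entry.url, total, unused: total - used };
    })
    .filter((result) => result.unused > threshold)
    .sort((a, b) => b.unused - a.unused); // worst offenders first
}

const report = summarizeUnusedJs([
  { url: "/js/vendor.js", text: "x".repeat(30000), ranges: [{ start: 0, end: 5000 }] },
  { url: "/js/menu.js", text: "x".repeat(10000), ranges: [] },
]);
console.log(report);
```

Files at the top of the report are the first candidates for removal, code splitting, or minification.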

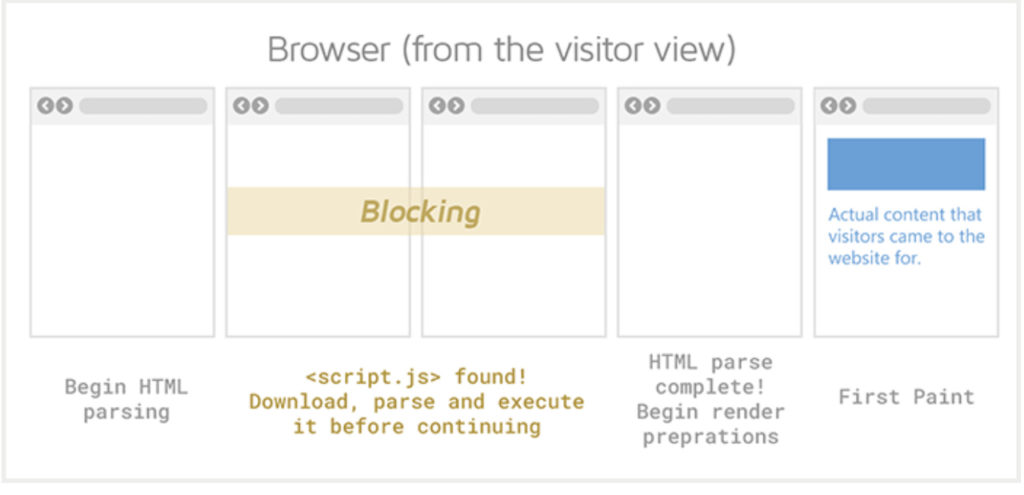

6. Site Speed Issues: Render-Blocking Resources

Render-blocking resources, such as JavaScript or CSS files, slow down site speed performance and can prevent Google from reading and crawling a page.

When your browser makes a request to load a webpage, the browser also downloads all the resources for you to view the webpage properly, including large JavaScript and CSS files that may be located in the <head>.

This process can block the browser from quickly rendering the page as it allocates bandwidth and time to download files. Once all files are downloaded, your browser can then render and display the full webpage.

Because this process takes time and bandwidth, it slows down site speed performance, which can hurt your site’s Core Web Vitals metrics and rankings.

source: https://gtmetrix.com/eliminate-render-blocking-resources.html

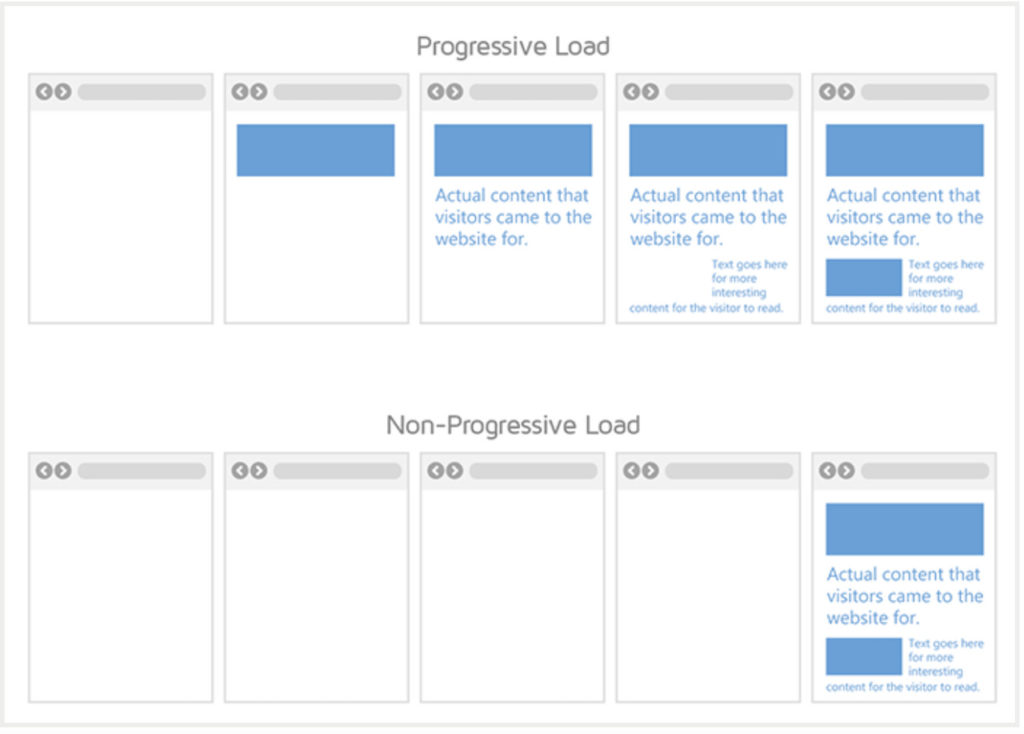

How to Make Your JavaScript SEO-Friendly

- Defer all noncritical resources, such as JavaScript that isn’t needed for the initial view: move them out of the <head> and toward the closing </body> tag, or load them with the defer or async attribute.

- For all critical resources, inline the script within the body above the fold, so that only critical elements load first.

- This will encourage progressive loading for your page (see above image), which can reduce lagging in site speed performance and improve user experience.
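As a simple way to audit this, the sketch below takes a list of script descriptors and returns the ones that are likely to block rendering: external scripts in the <head> that are not marked async, defer, or type="module". The descriptor shape `{ src, inHead, async, defer, type }` and the function name are assumptions for this example; in practice you would build the list from your own templates or a crawl.

```javascript
// scripts: [{ src, inHead, async, defer, type }] (hypothetical descriptor shape)
function findRenderBlockingScripts(scripts) {
  return scripts
    // Only external scripts placed in the <head> are considered here.
    .filter((script) => script.inHead && Boolean(script.src))
    // async, defer, and module scripts do not block HTML parsing while downloading.
    .filter((script) => !script.async && !script.defer && script.type !== "module")
    .map((script) => script.src);
}

console.log(
  findRenderBlockingScripts([
    { src: "/js/app.js", inHead: true },
    { src: "/js/chat-widget.js", inHead: true, defer: true },
    { src: "/js/analytics.js", inHead: true, async: true },
    { src: "/js/footer.js", inHead: false },
  ])
);
```

Each script this returns is a candidate for a defer attribute or a move toward the end of the body, provided the page does not need it for the first paint.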

Only the Tip of the Iceberg for JavaScript SEO

JavaScript is an impressive and dynamic tool for enhancing website performance and user engagement. However, it continues to present challenges for SEO performance, including indexation, crawlability, site speed, and content visibility.

Although these six issues are common for many sites built with JavaScript frameworks, they’re only the tip of the iceberg when it comes to examining the deeply complex relationship between web development and SEO performance.